By Jeff DiBattista, DIALOG

It’s no secret that the world around us, because of the technology we create, is changing at a breathtaking pace. In fact, this change is so far from being a secret, so present in our personal and professional lives, that many of us wish we didn’t have to confront it so often. From smartphones to smart houses, from Uber to autonomous vehicles, from Siri to Alexa, we are immersed in new technologies constantly. And while experiencing new technologies can be exciting (especially for people younger than I am!), for others of us it can be unnerving, overwhelming, or confusing. But it need not be, if we understand the trends behind the changes. I believe it’s important to seek out the patterns behind the technological changes surrounding us, and to engage with those developments, rather than allowing our fears to paralyze us into inaction.

So, I’m writing to share some of my observations and thoughts on these technological trends, in the hope they will help you and your organization take full advantage of them. But first, full disclosure: I’m not a computer scientist. I haven’t even done any programming since I left university 20 years ago. I’m a structural engineer (who loves to design in steel) and a business owner, and I have simply been working to develop my understanding of technological change. The goal I keep in mind is to help my company, DIALOG, be a leader in a changing world. I fundamentally believe that we should see the rapid progression of technology as an opportunity, not a threat. Instead of standing still and allowing the technological change in the world to disrupt us, let us embrace new technologies and use them to strengthen our businesses, to enable our employees, and to meaningfully improve the wellbeing of our communities. Let us not be among those disrupted by technological change; let us be the disruptor!

From chips to Crays to crazy fast

Figure 1 Gordon E. Moore, “Cramming More Components onto Integrated Circuits,” Electronics, pp. 114–117, April 19, 1965.

Before we can look forward to the emerging trends in technology, we must look back to gain context. Many of you have probably heard of “Moore’s Law” in computing, but few people know its actual origins. Way back in 1965, Gordon Moore, who would later co-found Intel, observed a trend with integrated circuits, which are otherwise known as computer chips. Year over year, starting in 1959, Moore mapped out the number of transistors that could fit onto a single computer chip. He observed that technological progress was enabling the number of transistors on a single chip to double every year or two: One transistor in 1959; eight transistors (2x2x2) by 1962; thirty-two transistors (2x2x2x2x2) by 1964; and so on. When the transistor count is plotted against the year on a logarithmic scale, this rate of change appears as a straight line: the essence of Moore’s Law (Figure 1). And, with great foresight, Moore extended a dashed line from his data and predicted that the doubling trend would continue for years into the future. Ever since, technological progress has kept pace with this trend, from a single transistor on a chip back in 1959 to chips today that contain tens of billions of transistors. If I were a betting man (and I am), I’d wager that the exponential increase we’ve seen in computing power will continue well into the future. The real question for us is: What will that computing power be able to do?
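The doubling arithmetic behind these numbers can be checked with a few lines of Python. This is a minimal sketch of an idealized Moore’s Law, assuming exactly one transistor in 1959 and a fixed doubling period; the function names and the figure of 20 billion transistors used to stand in for “tens of billions” in 2018 are illustrative assumptions, not data from Moore’s paper.

```python
import math


def transistors(year: int, start_year: int = 1959, doubling_years: float = 1.0) -> float:
    """Idealized transistor count per chip: one transistor in start_year,
    doubling every `doubling_years` years (illustrative model only)."""
    return 2 ** ((year - start_year) / doubling_years)


def implied_doubling_period(count: float, year: int, start_year: int = 1959) -> float:
    """Average doubling period (in years) needed to grow from one transistor
    in start_year to `count` transistors in `year`."""
    doublings = math.log2(count)
    return (year - start_year) / doublings


# Doubling every year reproduces the counts quoted in the text (1, 8, 32).
for y in (1959, 1962, 1964):
    print(y, int(transistors(y)))

# On a log2 scale each year adds exactly 1 / doubling_years,
# which is why the trend plots as a straight line.
print([math.log2(transistors(y)) for y in range(1959, 1965)])

# ASSUMPTION: about 20 billion transistors on a leading chip in 2018.
print(f"{implied_doubling_period(20e9, 2018):.2f} years per doubling")
```

Averaged over the whole 1959 to 2018 span, the implied doubling period lands between one and two years, which is consistent with the “every year or two” rate described above.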

Figure 2 The Cray 2 supercomputer in 1985…roughly the same capability as an already outdated Apple iPhone 4.

So that we can wrap our heads around what Moore’s Law actually means in our lives, let’s look at a few examples. Way back in 1985, when I was just a gangly teenager, the Cray 2 was the leading supercomputer in the world. It had a computing power of about 1.9 gigaFLOPS (1.9 billion floating point operations per second). In other words, it could do billions of tiny math calculations every second. The Cray 2 cost millions of dollars, weighed about four tons, and needed a 200-gallon tank for cooling fluid. But by 2010—only 25 years later—the Apple iPhone 4 had a roughly equivalent computational capability, cost less than $1000, and could fit in your shirt pocket. That’s the surprising power of Moore’s Law.

Let’s consider an example even closer to our everyday lives. Think about the smartphone in your pocket right now and compare it to the phone you had ten years ago. In 2008 I had a BlackBerry 8800 with a weird little trackball instead of a touch screen. Some of my colleagues had the first model of the iPhone, which had just been released the previous year. Today, my Samsung Galaxy S8 (a bit out of date, since it was released over a year ago) has about 1000 times more computing power and 1000 times more memory than that old BlackBerry 8800. If this rate of technological progress continues for the next 10 years, or the next 25, what power will we carry with us in our pockets then?
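A thousandfold gain in ten years is close to 2 multiplied by itself ten times (1024), which means the computing power in our pockets has roughly doubled every year. This short Python sketch makes that arithmetic explicit and carries it forward; it assumes the growth rate stays constant, which is exactly the open question the paragraph asks, and the function names are hypothetical.

```python
import math


def doubling_period_from_growth(growth_factor: float, years: float) -> float:
    """Years per doubling implied by a total `growth_factor` over `years`."""
    return years / math.log2(growth_factor)


def extrapolate(growth_factor: float, years: float, future_years: float) -> float:
    """Total growth after `future_years`, assuming the same constant
    exponential rate that produced `growth_factor` over `years`."""
    return growth_factor ** (future_years / years)


# Roughly 1000x more computing power from 2008 to 2018 (figure from the text).
print(f"{doubling_period_from_growth(1000, 10):.2f} years per doubling")

# If the same rate holds (an assumption, not a forecast):
print(f"next 10 years: about {extrapolate(1000, 10, 10):,.0f}x")
print(f"next 25 years: about {extrapolate(1000, 10, 25):,.0f}x")
```

At a constant rate, another 25 years would multiply today’s pocket computing power by more than 30 million, which is why the question is worth asking.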

Tech today

Today we are all carrying little supercomputers in our pockets, supercomputers that are wirelessly connected to countless other supercomputers. Together, those supercomputers are changing our lives and the lives of our children and the lives of our colleagues. Think about how much your smartphone has changed your life already. For example, smartphones have enabled new business models like Uber and Lyft to radically disrupt personal transportation. Push a button on your smartphone and a driver will pick you up, usually within five minutes, more reliably, more cost-effectively, and with less payment hassle than a taxi. Uber is so convenient and affordable that I’ve concluded I don’t need to own a car anymore, and I can eliminate all the extra costs that come with owning a vehicle. (For the record, I don’t own any Uber shares, and am not on their payroll.) Right now, as I write, my car is listed for sale on AutoTrader and Kijiji. I just don’t need it anymore, thanks to Uber. (It’s a 2015 Toyota Camry Hybrid XLE with low miles. Make me an offer!)

Figure 3 Autonomous cars are a common sight these days in cities like San Francisco.

But ride-sharing apps are just the starting point. Uber and many other players are investing billions to develop autonomous vehicles, putting at risk the jobs of millions of drivers. While here in Canada the thought of driverless cars on our streets may seem like a far-off dream, autonomous vehicles are already driving the streets in cities like Phoenix and San Francisco (Figure 3). These technologies are being enabled by the continuing development of smaller, faster, cheaper computing power coupled with more advanced sensors. And these smaller, faster, cheaper computers are also enabling another trend: the exponential improvement of machine learning and artificial intelligence, or “AI”. In simple terms, these are computer systems that teach themselves by recognizing patterns in data.

Applying machine learning and artificial intelligence to work that has traditionally been done solely by humans is the next industrial revolution. As an example of the speed of progress, in November 2016 Google replaced the engine of its traditional online Translate service with an AI “brain”; at first, the AI translator made a lot of mistakes, but it learned remarkably quickly. Within weeks the AI translator was working at a level nearly on par with human translators.[1] Building on that success, by October 2017 the AI engine allowed Google to translate in real time through its Pixel Buds earbuds.[2] Two people speaking different languages can hold a conversation while Google’s AI engine translates between them. More recently, Google demonstrated a new capability of its AI Assistant, which learns and responds so effectively that it sounds entirely like a human: while making a live phone call, the Assistant talked to a person who couldn’t tell that they were speaking with a computer.[3] The Assistant’s ability to hold a human conversation was amazing, to the point of being a bit disturbing: How long will it be before having natural conversations with computers becomes normal?

Other examples of AI-driven technological progress are emerging daily. Researchers in Singapore recently created a robot that can devise and execute a plan to put together an Ikea chair in just over 20 minutes, starting from a loose pile of parts.[4] (Still not as fast as me, if I force myself to read the instructions first!) In 2017, an AI-driven computer from Carnegie Mellon won a 20-day poker tournament, learning how to bluff and outsmarting some of the world’s top poker players.[5] And, as of June 2018, the most powerful supercomputer in the world is at Oak Ridge National Laboratory in Tennessee, reclaiming the title from China. It’s capable of performing at 200 petaFLOPS: 200 million billion calculations per second.[6] While this is only about one-fifth of the estimated capacity of the human brain, if current technological trends continue, we might expect that within the next 25 years or so (about the time it took the Cray 2’s capability to reach the iPhone 4) you will be able to buy a computer that fits in your pocket, costs less than $1000, and has the computing power of the human brain. What will that technology be able to do for us? And are we prepared for this emerging reality?
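The reasoning in this paragraph rests on two numbers that can be made explicit: the 25-year gap between the Cray 2 (1985) and the iPhone 4 (2010), and the ratio between the fastest supercomputer of 2018 and the human brain. This Python sketch is illustrative only; the 1 exaFLOPS brain figure is an assumption inferred from the “two-tenths” comparison, and applying the same 25-year lag to the 2018 machine assumes the historical pattern simply repeats.

```python
import math

GIGA, PETA, EXA = 1e9, 1e15, 1e18

CRAY2_FLOPS = 1.9 * GIGA     # Cray 2, leading supercomputer in 1985
SUMMIT_FLOPS = 200 * PETA    # Oak Ridge supercomputer, June 2018
BRAIN_FLOPS = 1 * EXA        # ASSUMPTION: brain estimate implied by "two-tenths"

# Years for leading-supercomputer power to reach a pocket device,
# taken from the Cray 2 (1985) to iPhone 4 (2010) comparison.
POCKET_LAG_YEARS = 2010 - 1985


def pocket_year(supercomputer_year: int, lag: int = POCKET_LAG_YEARS) -> int:
    """Year a given supercomputer's power might fit in a pocket, if the lag repeats."""
    return supercomputer_year + lag


def doublings_needed(current: float, target: float) -> float:
    """Number of doublings needed to grow from `current` to `target`."""
    return math.log2(target / current)


print(f"Oak Ridge machine vs. brain estimate: {SUMMIT_FLOPS / BRAIN_FLOPS:.1f}")
print(f"Same power in a pocket around {pocket_year(2018)}")
print(f"Extra doublings to brain scale: {doublings_needed(SUMMIT_FLOPS, BRAIN_FLOPS):.1f}")
print(f"Growth since the Cray 2: {SUMMIT_FLOPS / CRAY2_FLOPS:.1e}x")
```

Under these assumptions, a pocket device matching the 2018 leader would still be a couple of doublings short of the brain estimate, so “25 years or so” is best read as a rough horizon rather than a firm date.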

The Steel Industry: AI warriors, or worriers?

As with autonomous cars, heavy investments are being made in developing long-haul autonomous trucks that will someday threaten the jobs of millions of truck drivers.[7] This investment is happening close to home here in Canada, too: This summer, Imperial Oil started testing driverless oilsands haulers at its Kearl site in Alberta.[8] What happens to those drivers and their livelihoods? The big risk is that a fundamental assumption of macroeconomics (that as technology eliminates jobs, people can retrain for new jobs) isn’t necessarily valid anymore, because the technological changes are coming at us too quickly. With these developments happening in our own backyard, the relevance of these technological shifts to the steel industry will only continue to grow.

While many other sectors accelerate, construction has had the lowest productivity gains of any industry: incredibly, in America construction productivity has actually fallen by half since the late 1960s.[9] This is partly because the industry relies more on labour than on machinery. The Economist notes that the construction industry “mostly ignores tools that might improve productivity.”[10] The McKinsey Global Institute looked at the state of digitization in sectors across the U.S. economy, analyzing digital assets, usage, and labour. In its results, construction ranked second-lowest, ahead of only agriculture and hunting.[11]
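To put “fallen by half since the late 1960s” on the same footing as the doubling rates of computing, both can be converted into a constant yearly rate. This is a minimal Python sketch; the roughly 50-year span is an assumption standing in for “late 1960s to 2017”, and the two-year doubling period is the slower end of the “every year or two” range attributed to Moore’s Law.

```python
def annual_rate(total_ratio: float, years: float) -> float:
    """Constant yearly rate r such that (1 + r) ** years == total_ratio."""
    return total_ratio ** (1 / years) - 1


# ASSUMPTION: "late 1960s" to 2017 treated as roughly 50 years.
construction = annual_rate(0.5, 50)   # productivity halved
computing = annual_rate(2, 2)         # doubling every two years

print(f"Construction productivity: {construction:+.2%} per year")
print(f"Computing power:          {computing:+.2%} per year")
```

A loss of under 1.5% a year looks harmless in any single year, yet compounded over five decades it halves productivity, while computing compounds just as relentlessly in the opposite direction.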

We should not take comfort from the fact that construction workers don’t seem to be displaced by AI as rapidly as workers in other sectors. Rather, we must recognize the risk that the construction industry is falling dangerously behind in a fast-paced world, and any industry that falls behind becomes a primary target for disruption. Why? Because industries that do not optimize themselves are a juicy, profitable target for the implementation of game-changing technologies. Netflix destroyed Blockbuster. iTunes and Spotify bankrupted HMV. Uber is overwhelming the taxi business. Who is going to disrupt the construction industry?

Self-disruption, not self-destruction

Who can disrupt the structural steel industry? We can.

There is no one better equipped to disrupt our industry than ourselves. We already have the supply chains, the shop space, the CNC equipment, and the people with decades of steel know-how. We just need to inject some new thinking. We just need to open our minds to change. We just need to systematically challenge and digitize the way we get things done in every corner of our industry, from designers to steel mills to service centres to fabricators to erectors.

Where are we going to get the injection of new thinking that will help us disrupt ourselves? There are opportunities everywhere. Learn from one another through the CISC. Build relationships with universities, like the CISC Steel Centre at the University of Alberta (check out www.steelcentre.ca). Hire co-op students, not just from engineering, but also from computer science. Poll your own staff to see who has a background in technology; you’ll probably be surprised by the tech talent you already have on your team. And it’s not just about technology: It’s about lean processes; it’s about prefabrication; it’s about measuring and tracking data. Taken in combination, these are powerful tools that will allow the steel industry to disrupt itself.

Figure 4 Hackathons have become regular events in the DIALOG studios, where people learn to use programming to build tools that optimize their own work.

Steel yourself!

I fundamentally believe that we should see the rapid progression of technology as an opportunity, not a threat. Technology will allow us to automate many menial tasks, freeing our talented people to focus more of their energies on creative, high-value work for our clients and customers. I’ve been challenging the people I work with to see the tremendous opportunity that lies before us, and to open our minds to embrace technological change. Now, I’m challenging you. Though it won’t be easy at first, let us embrace new technologies and use them to strengthen our businesses, to enable our employees, and to meaningfully improve the wellbeing of our communities. There is a lot to be excited about: we can learn from the world around us, and we can find support in the teams we work with. Together, let’s disrupt the steel industry for the better!

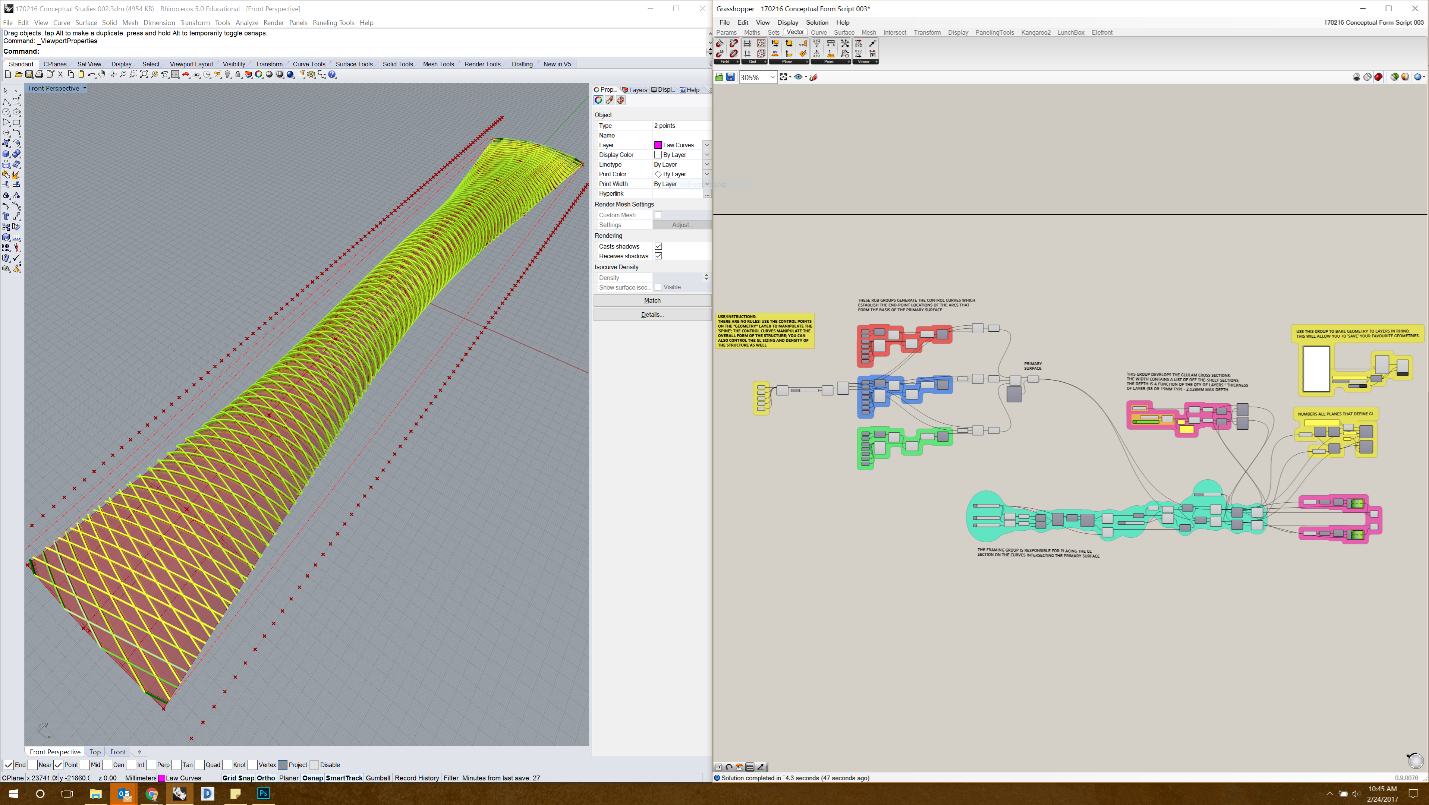

Figure 5 Computational design in action, disrupting traditional approaches to design and drafting. This tool parametrically generates structural framing on complex surfaces.

[1] https://www.nytimes.com/2016/12/14/magazine/the-great-ai-awakening.html

[2] https://www.engadget.com/2017/10/04/google-pixel-buds-translation-change-the-world/

[3] https://www.youtube.com/watch?v=D5VN56jQMWM

[4] https://www.nytimes.com/2018/04/18/science/robots-ikea-furniture.html

[5] http://time.com/4656011/artificial-intelligence-ai-poker-tournament-libratus-cmu/

[6] https://www.technologyreview.com/s/611077/the-worlds-most-powerful-supercomputer-is-tailor-made-for-the-ai-era/

[7] https://www.nytimes.com/2017/01/25/business/dealbook/how-efficiency-is-wiping-out-the-middle-class.html

[8] http://www.mining.com/web/imperial-testing-driverless-oilsands-haulers-kearl/

[9] https://www.economist.com/business/2017/08/17/efficiency-eludes-the-construction-industry

[10] https://www.economist.com/business/2017/08/17/efficiency-eludes-the-construction-industry

[11] https://hbr.org/2016/04/a-chart-that-shows-which-industries-are-the-most-digital-and-why

[12] https://www.forbes.com/sites/tomdavenport/2018/02/08/shining-up-a-rusty-industry-with-artificial-intelligence/#e2ff77c61c43